Being Alive: A Brief History of the Idea of AI

By Rebecca Nesvet

Someone to crowd you with love

Someone to force you to care

Someone to make you come through

Who’ll always be there

As frightened as you of being alive

Being alive

Being alive

Being alive!

—Stephen Sondheim, “Being Alive,” from Company (1970)

Artificial Intelligence (AI) is an idea, not a technology. People have been dreaming about that idea for some time. This is a brief history of that idea in the modern Anglophone world. I am not an AI scientist, or any kind of scientist, but I have read a little about the history of AI, and I will try to share it here. At least, I will try to do a better job of summarizing some of its landmarks than ChatGPT would.

The Industrial Revolution of the late eighteenth and early nineteenth centuries made it seem as if, technologically, anything was possible. Steam engines vastly enhanced human power. Jacquard looms wove fabrics in elaborate patterns, guided by punched cards that seemed to give the machines a memory. A fascination of dilettantes and absolute rulers in this era was the “automaton”: a machine, usually shaped like a human or an animal, that could perform bodily functions, carry out creative tasks, or even, seemingly, think and feel.

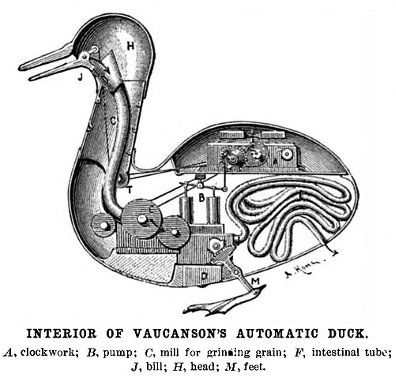

Vaucanson’s Duck. Wikimedia Commons.

In this vein, inventors such as Jacques de Vaucanson (1709-1782) and Pierre Jaquet-Droz (1721-1790) and his sons built automata, often for the kings of France’s pampered, derelict, and soon-to-be-abolished Bourbon dynasty. Jaquet-Droz’s automaton writer and musician are uncanny ancestors of modern toys such as Teddy Ruxpin. Vaucanson’s Duck was more ingenious, seeming to eat and defecate, which Vaucanson’s Bourbon patron considered proof of its sentience. After all, for a regime that assumed that the French peasantry, enslaved people of African descent, indigenous people, and women were all incapable of logical thinking and undeserving of autonomy, why would an “automaton” need to do more to prove itself alive than eat and defecate?

There were several automaton hoaxes that tried to convince gullible European publics that automata could think and make choices. One was the “Mechanical Turk,” which seemed to play chess, but turned out to involve a hidden human operator, probably a person with dwarfism or a child, concealed under a tablecloth or inside a box. (“Pay no attention to the man behind the curtain!” over 100 years before The Wizard of Oz!) Another was “Prosopographus,” an automaton artist that, according to advertisements, drew and cut out silhouette portraits from about 1820 through the 1850s, when it was destroyed. Prosopographus’s marketers claimed that it would put human artists out of business by doing a better job than they could. An engineering periodical published the suspicion that, like the Mechanical Turk, Prosopographus relied on a concealed human.

Fast forward to the Atomic Age, when the search for artificial intelligence, buoyed by arms races and despair at how organic human intelligence was turning out, took on new urgency.
British mathematician Alan Turing, decoder of Nazi encryption machines and father of computer science, developed the “Turing Test” (1950): a human-administered test to determine whether an AI system’s output is indistinguishable from human communication. You can see the Turing Test in action in the first scene of Ridley Scott’s cult 1982 science fiction film Blade Runner, in which an automaton (ironically, played by a human actor) fails a Turing-style “Voight-Kampff Test” conducted at the automaton-peddling Tyrell Corporation. Sadly, Turing died too soon, in 1954, of suicide, after he was chemically castrated by a British government obsessed with policing (his) homosexuality.

The Turing-style “Voight-Kampff” test conducted in Blade Runner.

The following year, in their proposal for the Dartmouth Summer Research Project on Artificial Intelligence (held in 1956), a team of four Americans, led by John McCarthy and Marvin Minsky, coined the term “artificial intelligence.” In 1959, Arthur Samuel unveiled a computer program that could play checkers (with no hidden human this time!) and, more importantly, could teach itself to play increasingly effectively. However, over-promising and high-profile technological failures destroyed investors’ confidence in the development of AI, creating a situation that lasted at least through the 1990s and came to be known as the “AI winter.”

Still, some of the original thinkers kept working. One was Minsky. Unfortunately, much of what he wrote remained theory that delivered few practical results. Like Vaucanson, however, he attracted a wealthy and powerful patron much more interested in developing “AI” than in using his money to solve any pressing real-world problems affecting ordinary people: financier Jeffrey Epstein. In 2002, Epstein and Minsky convened the “St. Thomas Common Sense Symposium,” which brought top AI scientists from places such as MIT to meet on Epstein’s Little St. James Island for convivial conversation about how to make AI demonstrate “Common Sense,” something that Epstein, Minsky, and company evidently believed that they themselves possessed. “There was a genuine sense of excitement at this meeting,” wrote Minsky, Pushpinder Singh, and Aaron Sloman. “The participants felt that it was a rare opportunity to focus once more on the grand goal of building a human-level intelligence” (Minsky et al. 122). The conference proceedings remain available to the public, for instance, courtesy of the University of California at San Diego, but the research was curtailed by, among other things, the limitations of Epstein’s attention span, Minsky’s death (2016), and the suicides of at least two participants (2006 and 2019).

Whether girls and women could also demonstrate “common sense,” or worthwhile thought, remained to be discovered by this gang of geniuses, though their proceedings do picture an unidentified girl using building blocks alongside, apparently, her brother.

In 2017-20, the AI winter effectively ended. OpenAI released its GPT-1 system in 2018. Building on Google’s development of the transformer architecture the previous year, GPT-1 was among the first Large Language Models (LLMs): AI systems able to generate text in response to prompts written in human language. A transformer is a neural-network design that works on text that has been converted into “tokens” (smaller, more efficient units, such as words and word fragments, that are transferable: think money). Through processes too complex to explain here, it predicts which tokens should come next, and those tokens are then translated back into language. The design was announced in a paper by Google researchers titled “Attention Is All You Need” (2017). OpenAI’s GPT-2 and GPT-3 followed in 2019 and 2020, with the user-friendly, open-access (mostly) ChatGPT released in November 2022. By early 2026, ChatGPT had accrued 900 million users who used it at least once a week. Meanwhile, other LLMs proliferated, including Microsoft’s Copilot, Google’s Gemini, and Anthropic’s Claude; the last of these has proven the LLM of choice for many coders. While some people use LLMs by choice, generative AI (AI that generates output arguably creatively, such as LLMs) also runs in the background of our lives: at banks, in hospitals (particularly for diagnostics), and in a multitude of applications, such as (to name only a few) calorie counters, fitness trackers, plant identifiers, and automated customer service chatbots.

Is all this really intelligence? Does it work like something that is intelligent, thinking, sentient? Metabolism aside, is an LLM close to being alive? Let’s apply the Bobby Test, a variation on the Turing Test, to find out. “Bobby” is the protagonist of Stephen Sondheim’s musical Company. Written during the social turbulence of the late 1960s, Company examines the existential crises faced by young metropolitan Americans. In its final song, “Being Alive,” quoted above, Bobby lists what he wants from a companion. My AI-based calorie counter app, Foodvisor, enforces accountability (“make[s] you come through”). Can that app, or ChatGPT, or any LLM, “crowd you with love”? Some people think so, such as a woman in Japan who married an AI agent. Can AI “force you to care”? Lawsuits alleging that ChatGPT and other AIs encouraged adolescents to take their own lives suggest that this plateau has not yet been reached. It seems as if AIs will now “always be there,” or at least, engaging with them is now unavoidable for anyone living in the developed world. Are they “frightened as you of being alive,” or frightened of anything at all? Not quite yet. Your game, inventors.